These days it’s probably easier to find a winged unicorn than a software organization that hasn’t adopted Agile.

We go agile since we expect a lot of benefits. We expect higher productivity, better quality and we’re also sold on the idea that we’re now able to react and adapt faster to change. However, that’s not necessarily the outcome.

In this article we’ll focus on one of the central, and often neglected, practices of agile methodologies: retrospectives. You’ll see why retrospectives get abandoned, the unfortunate consequences, and how you can improve them with a software evolution approach that uncovers the impact your work has on the system as a whole.

From values to ceremonies

When an organization decides to go agile, two things happen. First, we learn that cultural change is hard. That means that many of the principles and values that agile methodologies build upon are either ignored or slow to spread throughout the organization. Second, superficial change is much easier. The consequence is that ceremonies like stand-ups, story point estimation and retrospectives take off immediately.

Of these ceremonies, retrospectives are the most important: no matter what methodology you use, agile or not, taking a step back, reflecting on what you do and trying to improve is fundamental. Unfortunately, retrospectives are also the first practice that gets abandoned as the agile train rolls on in an organization.

The most common reasons that we abandon retrospectives after a few iterations are:

- It’s easy to do so since retrospectives are a team internal practice with no external deliverable. That is, no one will shout at you if you drop retrospectives. That alone makes retrospectives the first victim of a deadline.

- The discussions in a retrospective tend to stabilize after a few iterations/sprints. Without any new issues, the team perceives no real value in rehashing the same topics over and over again.

It doesn’t have to be this way, so let’s explore a technique that helps you get more focused and useful retrospectives.

Focus your retrospective on the system you build

Retrospectives tend to focus on what’s visible. In most retrospectives that I’ve taken part in, we used to discuss the outcome of the planning, suggested improvements to our way of working, and of course why we failed to meet the goal of the sprint. Again.

However, there’s one thing that’s sadly absent: the code itself. A retrospective should discuss the system the team tries to build. After all, that’s why we do all these improvements. We want to be more efficient at writing high-quality code and to work better together, making our development efforts easier and more predictable over time.

A number of years ago I started to apply the ideas that eventually became Your Code as a Crime Scene to retrospectives. And we found that approach so valuable that we decided to add tool support for it. Let’s see how it works.

An automated analysis of your behavioral patterns in a sprint

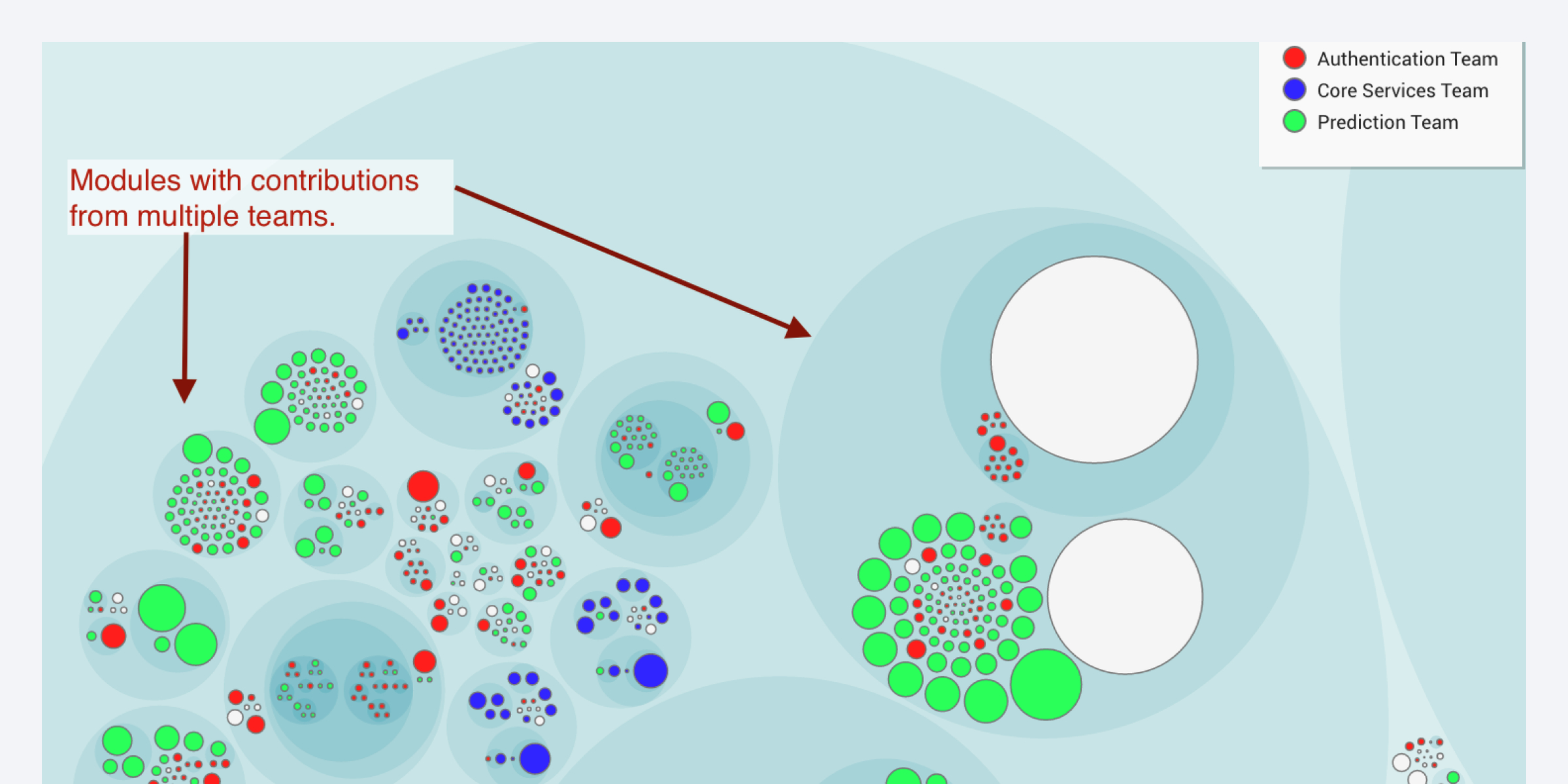

CodeScene is typically used to run analyses at regular intervals. However, in the latest version there’s a new choice: you can now request an analysis tailored to a retrospective, as shown in the figure above.

Once you press that blue Retrospective button, CodeScene runs an analysis on your team’s development activity over the last sprint. That means you get data on how your development efforts, features and stories actually impacted your codebase.

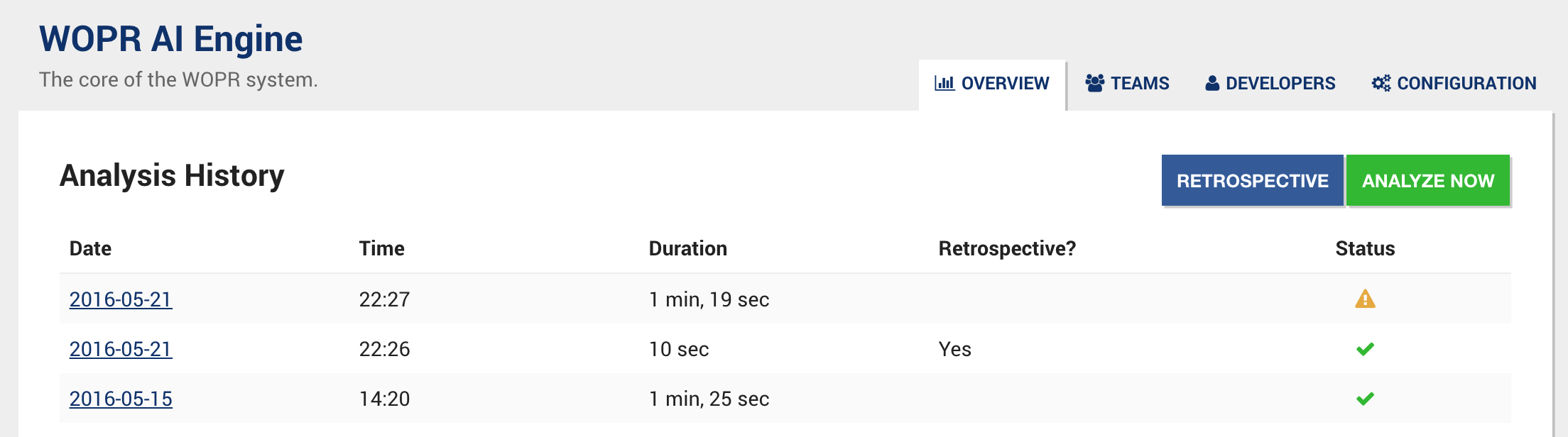

You see the result of a Retrospective analysis in the image above. There’s a lot of data on that dashboard so let’s dig into some of the highlights.

The value of Hotspots in a Retrospective

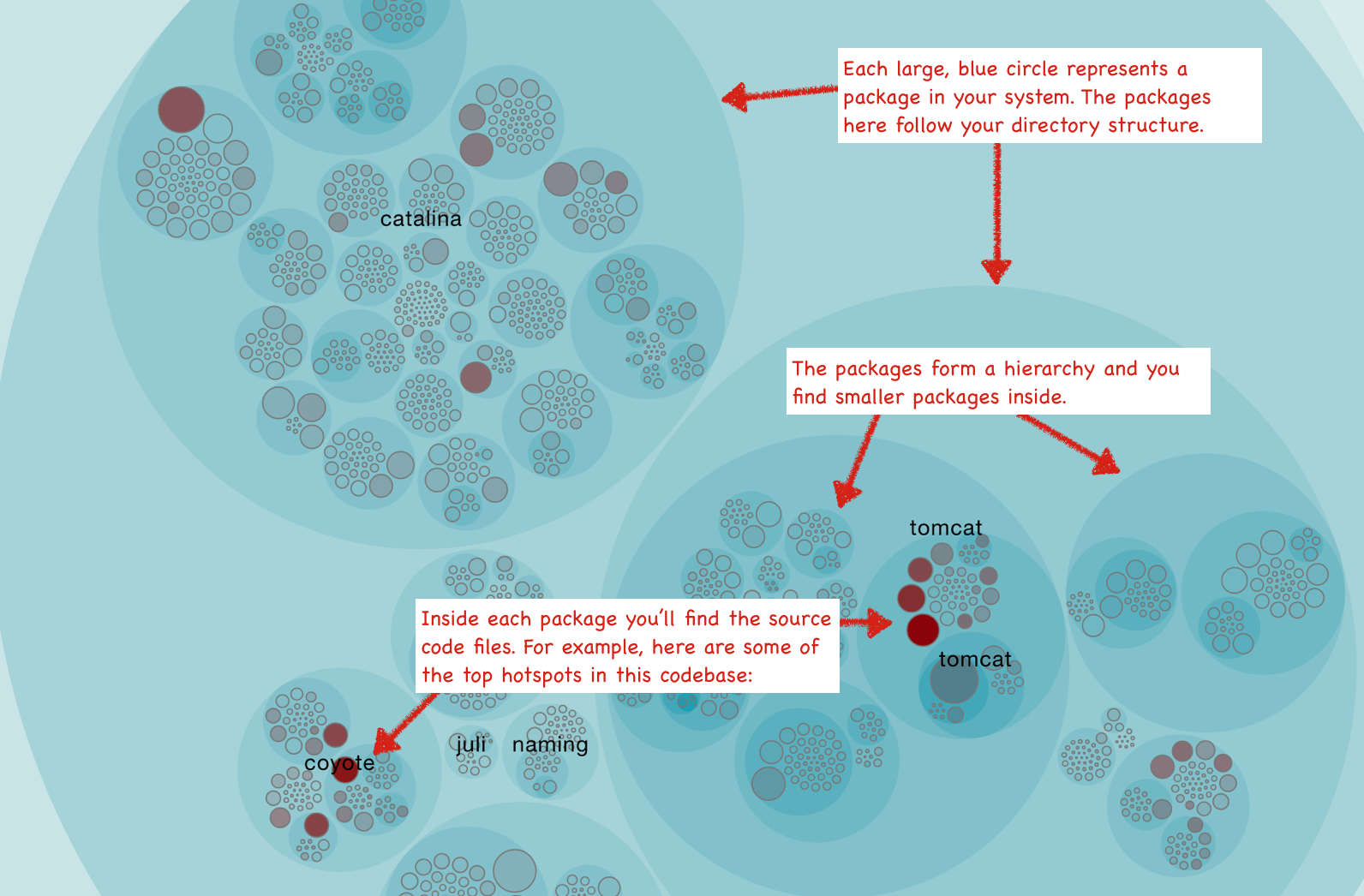

A hotspot analysis visualizes the development activity in your codebase. The more you’ve worked on a piece of code in the sprint, the more opaque and red the corresponding circle becomes.
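To make the idea concrete, here is a minimal sketch of how change frequency per file could be counted from version control data. It is not CodeScene’s implementation. It assumes the log was produced with something like `git log --since="2 weeks ago" --name-only --pretty=format:--%h`, and the function names and sample data are hypothetical.

```python
from collections import Counter

def parse_git_log(log_text):
    """Split the log into one list of file paths per commit.

    Assumes each commit starts with a line beginning with "--"
    (from --pretty=format:--%h) followed by the files it touched.
    """
    commits = []
    for line in log_text.splitlines():
        line = line.strip()
        if line.startswith("--"):
            commits.append([])          # a new commit begins
        elif line and commits:
            commits[-1].append(line)    # a file touched by that commit
    return commits

def change_frequencies(commits):
    """Count how many commits touched each file, most active first."""
    counts = Counter()
    for files in commits:
        counts.update(set(files))       # count a file once per commit
    return counts.most_common()

# Hypothetical sprint log, for illustration only.
SAMPLE_LOG = """\
--a1b2c3
src/billing.py
src/api.py

--d4e5f6
src/billing.py

--0a1b2c
src/billing.py
docs/readme.md
"""

hotspots = change_frequencies(parse_git_log(SAMPLE_LOG))
for path, revisions in hotspots:
    print(f"{revisions:3d}  {path}")
```

The files at the top of the list are the candidates to look at first in the retrospective. A real hotspot analysis would typically combine this with a measure of code complexity or size.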

The hotspot analysis provides an excellent starting point for your retrospective. Gather the team around the interactive hotspot map and see how your recent work affected the code. Your recent stories and features are all fresh in memory and here we capitalize on that. Used this way, a hotspot analysis helps you ask the right questions:

- Did your new features impact isolated parts of the system or did they spread across the entire architecture?

- Are there parts of the code which start to grow complicated?

A hotspot analysis also primes you for the upcoming planning stage of the next iteration. If you see that a new feature impacts a hotspot-rich area of the code, you can use that information to plan refactorings, improvements or extra testing.

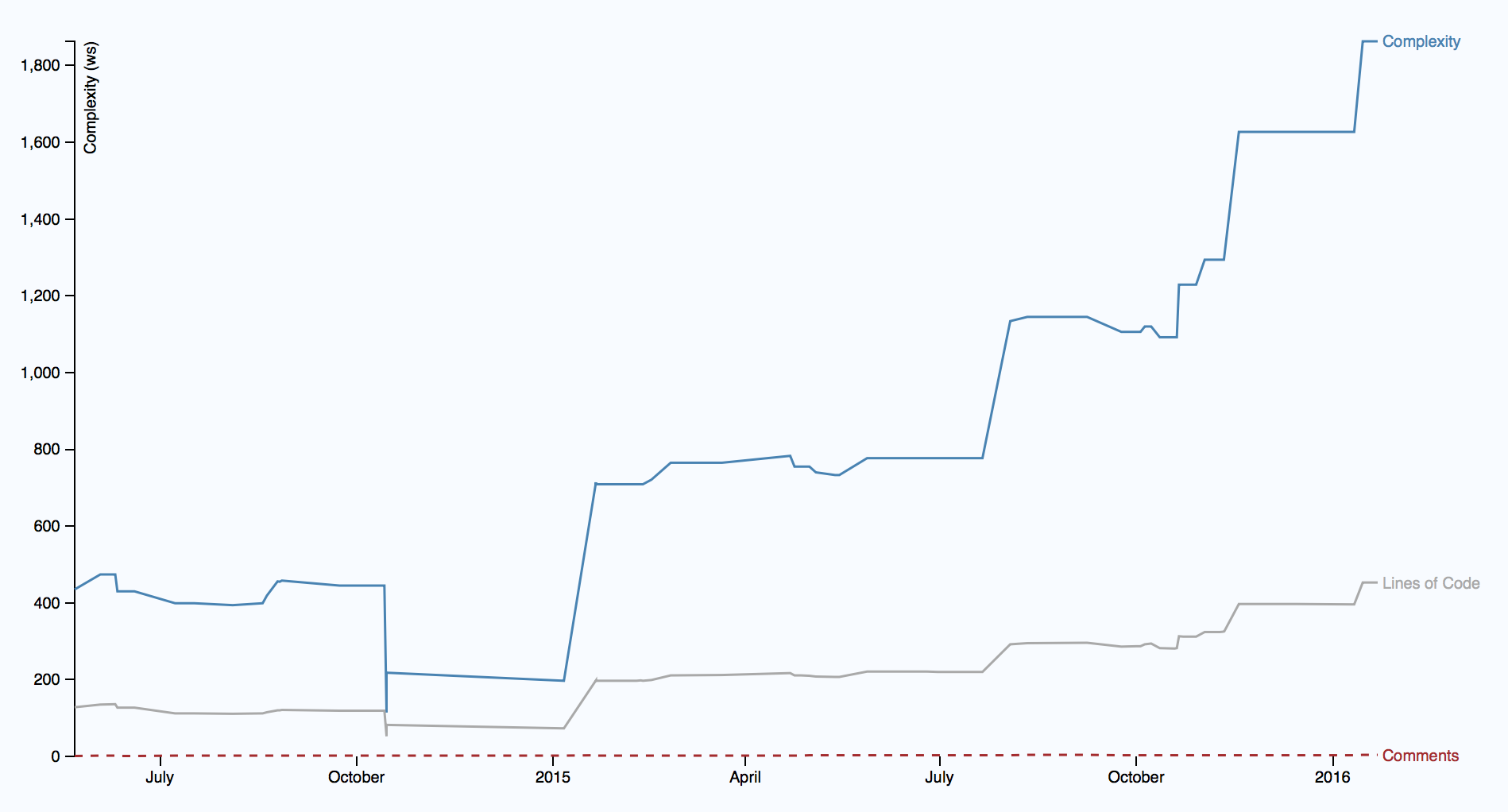

Of course, a hotspot analysis is only a starting point. You can dig deeper by inspecting the complexity trend of each hotspot, evaluating possible temporal coupling (different files that tend to change in the same commits) and much more. The most important detections are even automated. You see an example of that in the dashboard above: CodeScene provides an early warning in case the complexity of a piece of code starts to spiral out of control.
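The notion of files that tend to change in the same commits can be sketched in a few lines. The example below is an illustration under stated assumptions, not a description of how CodeScene computes it: the input is a list of commits, each a list of file paths, and the coupling degree (shared commits divided by the average number of revisions of the two files) is one possible definition chosen for this sketch.

```python
from collections import Counter
from itertools import combinations

def temporal_coupling(commits, min_shared=2):
    """Find pairs of files that changed together in at least min_shared commits.

    Returns tuples of (file_a, file_b, shared_commits, degree), where
    degree is shared commits over the average revisions of the pair
    (an assumed definition for this sketch).
    """
    revisions = Counter()   # commits per file
    shared = Counter()      # commits per pair of files
    for files in commits:
        unique = sorted(set(files))
        revisions.update(unique)
        shared.update(combinations(unique, 2))

    results = []
    for (a, b), n in shared.items():
        if n >= min_shared:
            degree = n / ((revisions[a] + revisions[b]) / 2)
            results.append((a, b, n, round(degree, 2)))
    return sorted(results, key=lambda r: r[2], reverse=True)

# Hypothetical commits from one sprint.
commits = [
    ["a.py", "b.py"],
    ["a.py", "b.py", "c.py"],
    ["a.py"],
    ["c.py"],
]
print(temporal_coupling(commits))
```

Pairs with a high degree that live in different parts of the architecture are good discussion topics, since they may hint at a hidden dependency.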

Social metrics are another valuable source of data. These metrics let you evaluate how well your architecture supports the way you work with it. Let’s look at an example.

Balance your architecture and organization

We developers tend to be organized into multiple teams. These teams are typically formed around either feature areas or along the technical axes of your architecture. In some cases a team is just an organizational unit without any relationship to the way the system is designed. This latter case is problematic as it will lead to conflicting changes to the code by independent teams. That in turn will increase your coordination overhead, one meeting after another. In addition, you’ll make your software harder to evolve since refactorings and re-designs will now have to be synchronized with the ongoing work of other teams.

Your organization should mean something with respect to how your system evolves. We all know that, but we’ve been limited to subjective reports on how well we succeed. That’s no longer the case. The social aspects of software design are invisible in the code itself, but CodeScene uses more than just the code - we build on version control data - and our tools present organizational and social metrics from the way you’ve worked so far.

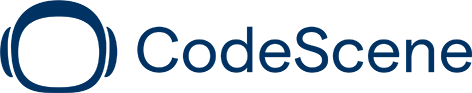

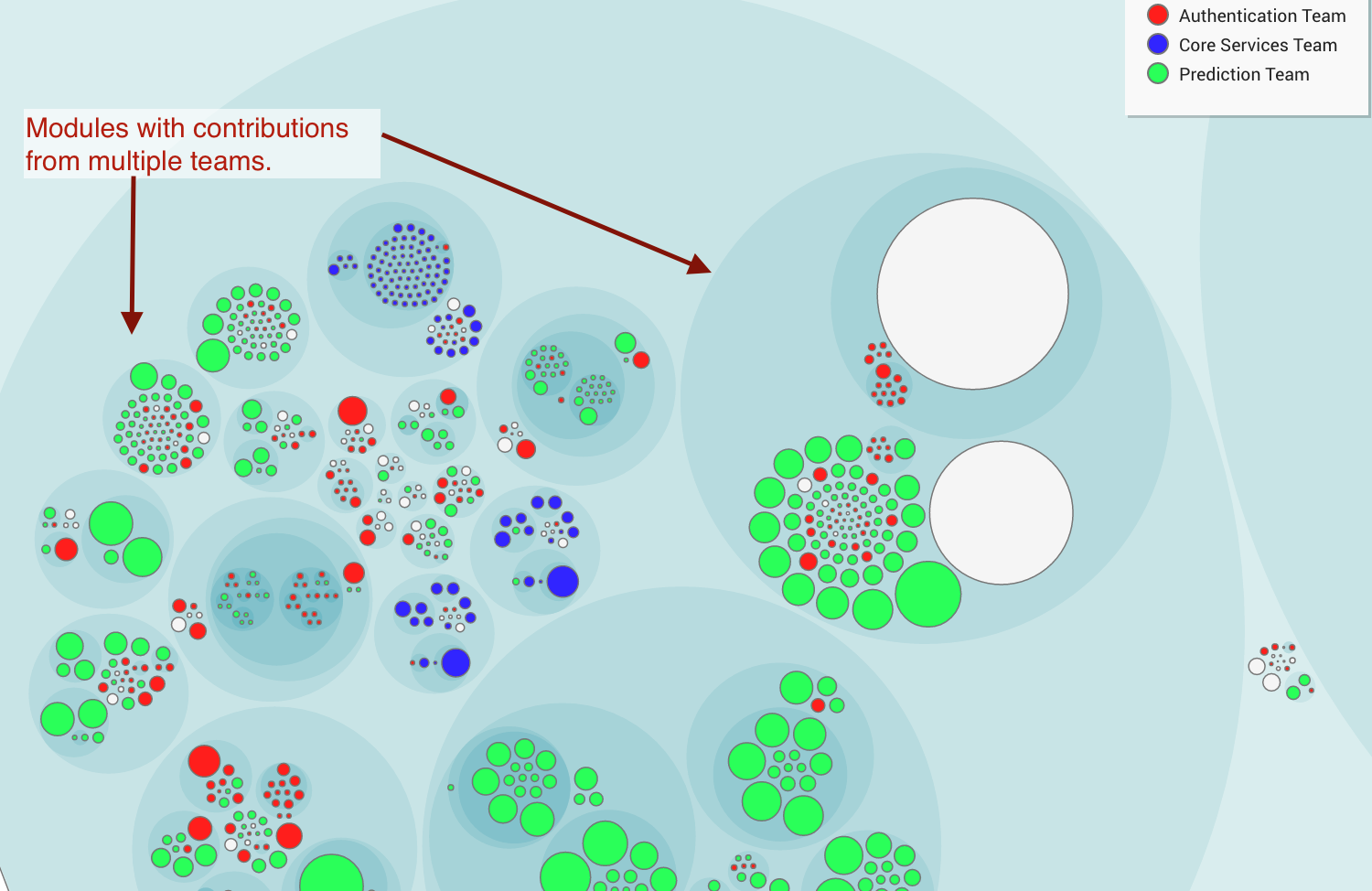

That means we get a second view to inform our retrospectives: a social view, as illustrated in the team knowledge map above.

You use the social metrics to evaluate how well your current organization aligns with your architecture. For example, have a look at the team knowledge map. You see a number of sub-systems with contributions from different teams. This pattern hints at expensive coordination needs. In addition, it’s the kind of coordination that leads to incompatible changes and puts you at risk for unexpected feature interactions.
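As a rough illustration of this kind of check, the sketch below counts changes per team for each sub-system and flags sub-systems where no single team clearly dominates. Everything in it is an assumption made for the example: the author-to-team mapping, the use of the top-level directory as the sub-system, and the 0.8 threshold. The (author, path) pairs could come from something like `git log --name-only --pretty=format:--%an`.

```python
from collections import Counter, defaultdict

def team_contributions(changes, author_team, depth=1):
    """Count changes per team for each sub-system.

    changes: iterable of (author, file_path) pairs.
    A sub-system is approximated by the first `depth` path segments,
    so a file in the repository root counts as its own sub-system.
    """
    by_subsystem = defaultdict(Counter)
    for author, path in changes:
        subsystem = "/".join(path.split("/")[:depth])
        team = author_team.get(author, "unknown")
        by_subsystem[subsystem][team] += 1
    return by_subsystem

def shared_subsystems(by_subsystem, max_main_share=0.8):
    """Flag sub-systems where the main team made at most max_main_share of the changes."""
    flagged = {}
    for subsystem, teams in by_subsystem.items():
        main_team, main_count = teams.most_common(1)[0]
        share = main_count / sum(teams.values())
        if len(teams) > 1 and share <= max_main_share:
            flagged[subsystem] = (main_team, round(share, 2))
    return flagged

# Hypothetical data, for illustration only.
author_team = {"ann": "payments", "bob": "platform", "cat": "search"}
changes = [
    ("ann", "billing/invoice.py"),
    ("bob", "billing/tax.py"),
    ("ann", "billing/tax.py"),
    ("cat", "search/index.py"),
    ("cat", "search/query.py"),
]
print(shared_subsystems(team_contributions(changes, author_team)))
```

A flagged sub-system is not a verdict, only a prompt for the discussion: it shows where several teams meet in the same code and coordination is likely to be needed.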

Once you’ve found a potential problem you need to react and get the attention of the other teams. Perhaps the organization lacks a team to take on a shared responsibility? More often you’ll find that code changes for a reason. The modules in the picture above probably attract different teams because they have too many responsibilities. Perhaps that sub-system would be better off split into different parts?

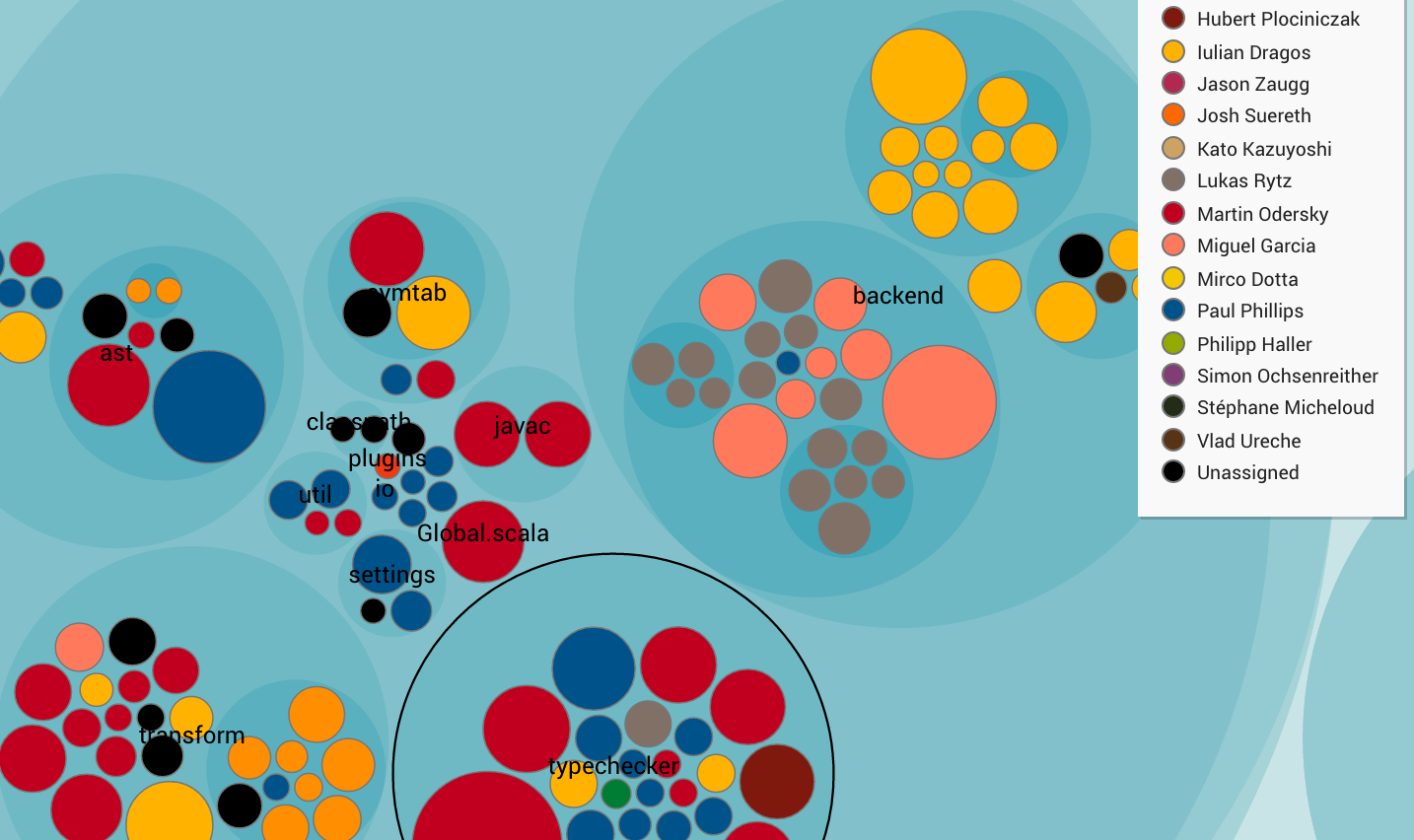

CodeScene also gives you the opportunity to inspect your individual contributions. There are multiple ways to use that knowledge map. For example, use it to discuss the knowledge distribution within your team or look for areas with high intra-team coordination needs.

Support your decisions with data

Once we start to use data on how our system grows, we make an important shift. We move away from speculation and reduce a number of social biases in the process. This is important since the traditional format of a retrospective has a number of problems. When you use data to guide your discussions you reduce those biases; it’s hard to argue with data, particularly when it’s your own. Use that information to focus your expertise on where it’s needed the most.